The biggest area of risk on any Salesforce implementation project is the data model. In my view this assertion is beyond question. The object data structures and relationships underpin everything. Design mistakes made in the declarative configuration, or indeed in technical components such as errant Apex Triggers or poorly executed Visualforce pages, are typically isolated and therefore relatively straightforward to remediate. A flawed data model, by contrast, will impact every aspect of the implementation, from the presentation layer through to the physical implementation of data integration flows. This translates directly to build time, build cost and the total cost of ownership.

It is therefore incredibly important that time is spent ensuring the data model is robust, fit for purpose and efficient in terms of normalisation, and also that large data volumes (LDV) are considered, that business-critical KPIs can be delivered via the standard reporting tools and that a viable sharing model is possible. These latter characteristics relate to the physical model, meaning the translation of the logical model into the target physical environment, i.e. Salesforce (or perhaps database.com).

Taking a step back, the definition of a data model should journey through three stages: conceptual, logical and physical design. Most projects jump straight into entity relationship modelling, a logical design technique. In extreme cases the starting point is the physical model, where traditional data modelling practice is abandoned in favour of a risky incremental approach, with objects being identified as they are encountered in the build process. In many cases starting with a logical model can work very well and enable a thorough understanding of the data to be developed, captured and communicated before the all-important transition to the physical model. In other cases, particularly where there is high complexity or low understanding of the data structures, a preceding conceptual modelling exercise can help greatly in ensuring the validity and efficiency of the logical model. The remainder of this post outlines one useful technique for performing conceptual data modelling: Object Role Modelling (ORM).

I first started using ORM a few years back on accounting-related software development projects where the data requirements were emergent in nature and the project context was of significant complexity. There was also a need to communicate early forms of the data model in simple terms and to show the systematic, fact-based nature of the model composition. The ORM conceptual data model delivered precisely this capability.

ORM – What is it?

Object Role Modelling is a conceptual data modelling technique based on the definition of facts in the form of natural language and intuitive diagrams. ORM models are subject to rigorous data population checks, the addition of logical constraints and iterative improvement. A key concept of ORM is the Conceptual Schema Design Procedure (CSDP), a prescriptive seven-step approach to the application of ORM, i.e. the analysis and design of data. Once the conceptual model is complete and validated, a simple algorithm can be applied to produce a logical view, i.e. a set of normalised entities (ERD) that are guaranteed to be free of redundancy. This generation of a robust logical model directly from the conceptual schema is a key benefit of the ORM technique.
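To give a feel for the ideas above (facts stated in natural language, population checks against uniqueness constraints, and the step from conceptual to logical), here is a minimal Python sketch. It is not the CSDP nor the full ORM relational mapping procedure: the `FactType` class, the `to_tables` function and the Contact/Account/Event facts are all hypothetical names invented for illustration, and the mapping handles binary fact types only.

```python
from dataclasses import dataclass, field


@dataclass
class FactType:
    """A binary elementary fact type, e.g. 'Contact works for Account' (illustrative only)."""
    subject: str              # object type playing the first role
    predicate: str            # natural-language reading of the fact
    obj: str                  # object type playing the second role
    unique_roles: frozenset   # roles carrying a uniqueness constraint: "subject" and/or "object"
    population: list = field(default_factory=list)  # sample (subject, object) facts

    def verbalise(self):
        """Render each sample fact as a sentence a domain expert can confirm or reject."""
        return [f"{self.subject} '{s}' {self.predicate} {self.obj} '{o}'"
                for s, o in self.population]

    def violations(self):
        """Population check: list sample values that break a declared uniqueness constraint."""
        found = []
        for role, index in (("subject", 0), ("object", 1)):
            if role not in self.unique_roles:
                continue
            seen = set()
            for fact in self.population:
                if fact[index] in seen:
                    found.append((role, fact[index]))
                seen.add(fact[index])
        return found


def to_tables(fact_types):
    """Much simplified conceptual-to-logical mapping (an assumption, not the full algorithm).

    A fact type with a uniqueness constraint on a single role is grouped into the
    table of the object type playing that role; a fact type with no single-role
    uniqueness (many-to-many) becomes a table of its own.
    """
    tables = {}
    for ft in fact_types:
        if "subject" in ft.unique_roles:
            tables.setdefault(ft.subject, [ft.subject]).append(ft.obj)
        elif "object" in ft.unique_roles:
            tables.setdefault(ft.obj, [ft.obj]).append(ft.subject)
        else:
            name = f"{ft.subject}_{ft.predicate.replace(' ', '_')}_{ft.obj}"
            tables[name] = [ft.subject, ft.obj]
    return tables


# Each Contact works for at most one Account and has at most one Email;
# a Contact may attend many Events and an Event has many Contacts.
works_for = FactType("Contact", "works for", "Account", frozenset({"subject"}),
                     [("Ann", "Acme"), ("Bob", "Acme")])
has_email = FactType("Contact", "has", "Email", frozenset({"subject"}),
                     [("Ann", "ann@example.com")])
attends = FactType("Contact", "attends", "Event", frozenset(),
                   [("Ann", "Dreamforce"), ("Bob", "Dreamforce")])

facts = [works_for, has_email, attends]
tables = to_tables(facts)
```

In this sketch, adding a counter-example such as `("Ann", "Globex")` to the `works_for` population makes `violations()` report the clash, which is the cue to go back to the domain expert and decide whether the constraint or the sample data is wrong.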

Whilst many of the underlying principles have existed in various forms since the 1970s, ORM as described here was first formalised by Dr. Terry Halpin in his PhD thesis in 1989. Since then a number of books and publications have followed from Dr. Halpin and other advocates. Interestingly, Microsoft made some investment in ORM in the early 2000s with the implementation of ORM as part of the Visual Studio for Enterprise Architects (VSEA) product. Tool support for Visual Studio is also available in the form of NORMA (Natural ORM Architect), a memorable acronym. International ORM workshops are held annually; the ORM2014 workshop takes place in Italy this month.

In terms of tool support, ORM2 stencils are available for both Visio and OmniGraffle.

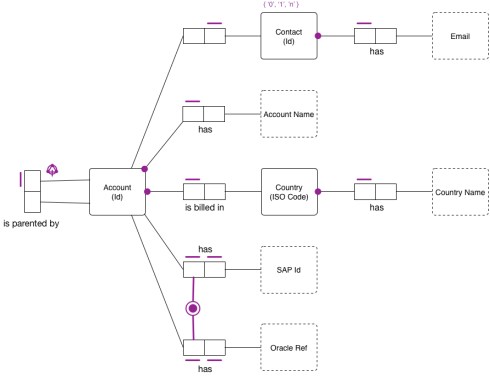

ORM Example

The technique is best described in the ORM whitepaper. I won’t attempt to replicate or paraphrase this content; instead, a very basic illustrative model is provided to give nothing more than a sense of how a conceptual model appears.

Final Thoughts

In most cases a conceptual data model can be an unnecessary overhead; however, where data requirements are emergent or sufficiently complex to warrant a distinct analysis and design process, the application of Object Role Modelling can be highly beneficial. Understanding the potential of such techniques is, I think, the most important aspect: a good practitioner should have a broad range of modelling techniques to call upon.

References

Official ORM Site

ORM2 Whitepaper

ORM2 Graphical Notation

OmniGraffle stencil on Graffletopia